The File data type was introduced around late 2019. At that time it was available only for canvas apps and Power Automate (Flow); subsequent updates added it to model-driven apps as well.

The File data type when creating the column:

By default, the maximum file size is 32,768 KB.

- Min – 1 KB

- Maximum – 131072 KB

Let us set it to 2000 KB and save the column.
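As a quick sanity check on these limits, here is a minimal Python sketch that validates a maximum-size setting and tests whether a file would fit. The constants come from the values quoted above; the function names are hypothetical, and treating 1 KB as 1024 bytes is an assumption about how the platform measures size.

```python
# Limits quoted above for the File data type (all in KB).
FILE_MIN_KB = 1
FILE_MAX_KB = 131072
FILE_DEFAULT_KB = 32768


def validate_max_size_kb(size_kb: int = FILE_DEFAULT_KB) -> int:
    """Return size_kb if it lies within the allowed range, else raise ValueError."""
    if not FILE_MIN_KB <= size_kb <= FILE_MAX_KB:
        raise ValueError(
            f"Maximum file size must be between {FILE_MIN_KB} "
            f"and {FILE_MAX_KB} KB, got {size_kb}"
        )
    return size_kb


def fits_in_column(file_size_bytes: int, max_size_kb: int) -> bool:
    """True if the file is no larger than the column limit.

    Assumption: 1 KB is counted as 1024 bytes.
    """
    return file_size_bytes <= max_size_kb * 1024
```

For example, `validate_max_size_kb(2000)` accepts the value used in this post, while `fits_in_column(3_000_000, 2000)` is false because the file exceeds the 2000 KB limit.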

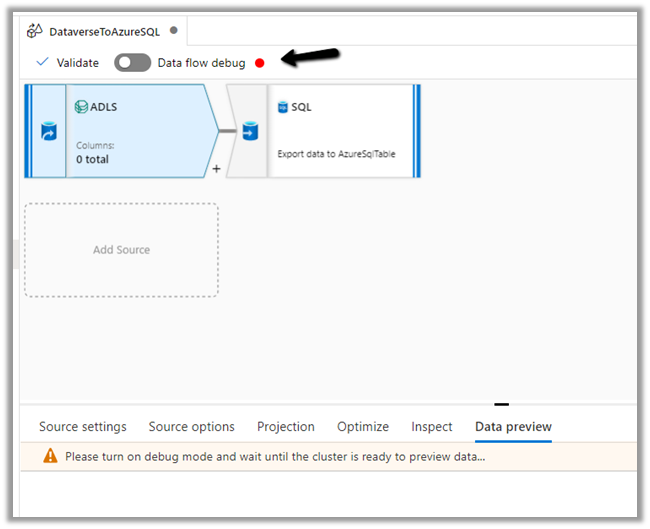

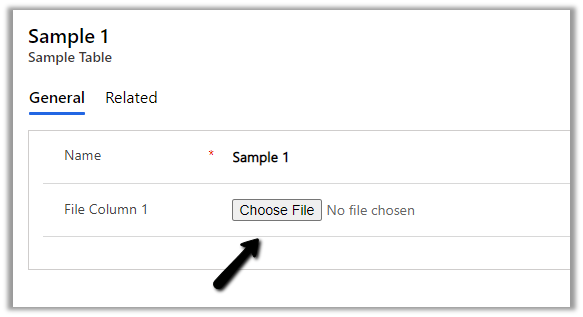

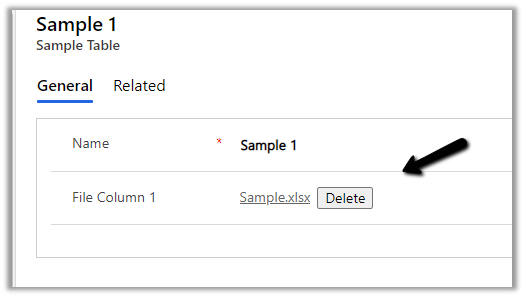

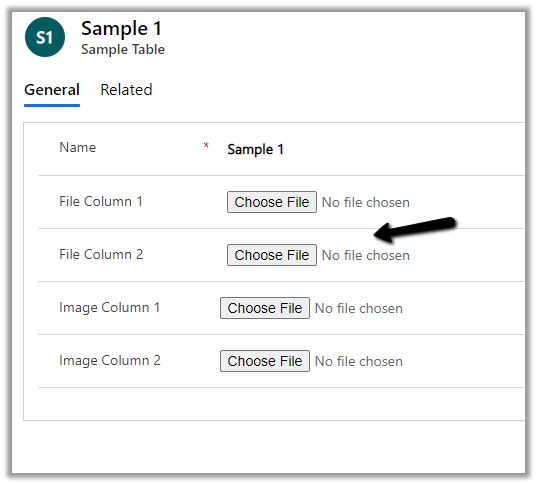

Here we have added the field in the form.

We need to save the record before the field is enabled.

Trying to upload a file larger than 2000 KB, the maximum size we specified while creating the column, gives the error below.

“The upload failed for File Column 1. Please try again or contact your admin.”

The Maximum file size property cannot be updated after the column is created.

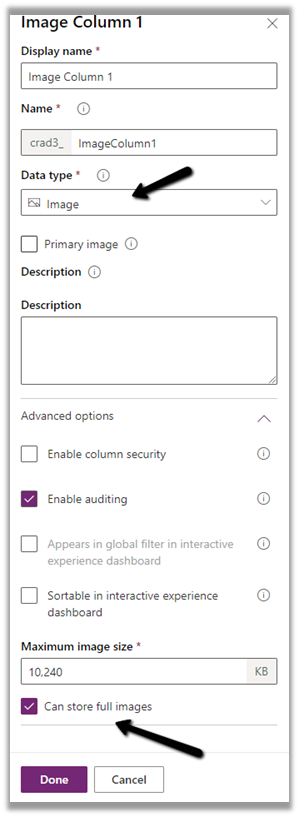

Similarly, we have the Image data type.

The default maximum size is 10240 KB.

- Min – 1 KB

- Max – 30720 KB

If the image is larger than the specified size, we get the error message below:

The upload failed for Image Column 2. Please try again or contact your admin.

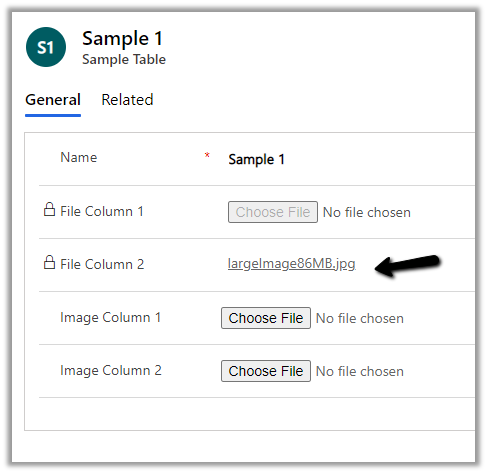

Below we have added a new file type column named File Column 2 with a maximum size of 100 MB.

With 2020 Release Wave 2, uploading a file of 16 MB or more through the API no longer requires explicit chunking, that is, dividing the file into data blocks of 4 MB or less.
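To make the earlier block-splitting step concrete, here is a minimal Python sketch that cuts a payload into blocks of at most 4 MB. It only illustrates the splitting itself; the function name is hypothetical, and the request headers needed to send each block are not shown.

```python
from typing import Iterator, Tuple

BLOCK_SIZE = 4 * 1024 * 1024  # 4 MB, the block limit mentioned above


def iter_blocks(data: bytes, block_size: int = BLOCK_SIZE) -> Iterator[Tuple[int, bytes]]:
    """Yield (offset, block) pairs that cover data in order.

    Every block has block_size bytes except possibly the last one.
    """
    if block_size <= 0:
        raise ValueError("block_size must be positive")
    for offset in range(0, len(data), block_size):
        yield offset, data[offset:offset + block_size]
```

Concatenating the yielded blocks in order reproduces the original payload, which is the property a chunked upload relies on.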

Earlier:

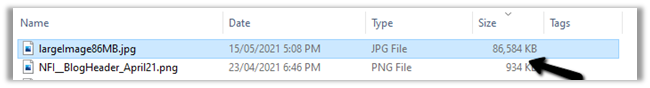

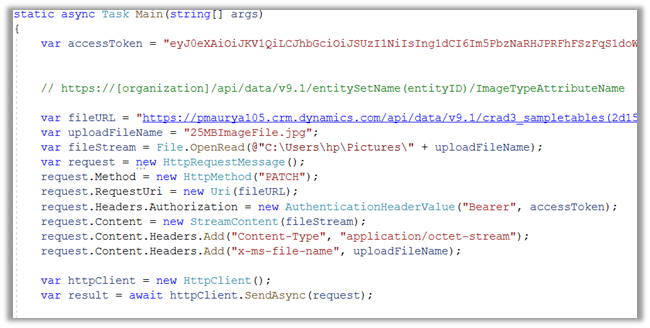

Below is the sample code we have used to upload a file of size 86 MB.
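For readers without access to that sample, here is a separate minimal Python sketch (not the code used in this post) that builds a single-request upload without sending it. It assumes the Web API accepts a `PATCH` to the column URL with an `application/octet-stream` body and an `x-ms-file-name` header; the entity set, column name, token, and helper names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class UploadRequest:
    """Plain description of an HTTP request; nothing is sent here."""
    method: str
    url: str
    headers: Dict[str, str]
    body: bytes


def build_upload_request(org_url: str, entity_set: str, record_id: str,
                         column: str, file_name: str, content: bytes,
                         token: str) -> UploadRequest:
    """Describe a single-request file upload (assumed request shape)."""
    url = f"{org_url.rstrip('/')}/api/data/v9.1/{entity_set}({record_id})/{column}"
    headers = {
        "Authorization": f"Bearer {token}",          # assumed OAuth bearer token
        "Content-Type": "application/octet-stream",  # raw bytes in the body
        "x-ms-file-name": file_name,                 # assumed file-name header
    }
    return UploadRequest("PATCH", url, headers, content)
```

The returned object can be handed to any HTTP client; keeping request construction separate from sending makes the shape easy to inspect before trying it against a real environment.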

The file uploaded:

Now let us look at the download part.

The URLs that can be used are:

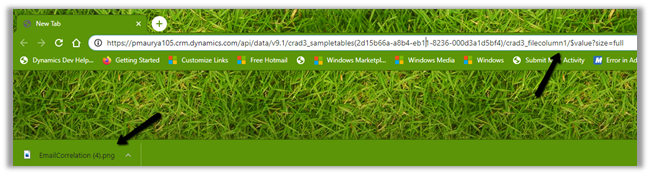

Byte array format:

https://[organization]/api/data/v9.1/entitySetName(entityID)/fileTypeAttributeName/$value?size=full

Base64 string format:

https://[organization]/api/data/v9.1/entitySetName(entityID)/fileTypeAttributeName/?size=full
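The two URL patterns above differ only in their suffix, which a small helper makes explicit. This is a minimal Python sketch; the function name and the sample values in the usage note are hypothetical.

```python
def file_download_url(org_url: str, entity_set: str, record_id: str,
                      column: str, as_bytes: bool = True) -> str:
    """Build the download URL for a file column.

    as_bytes=True  -> byte array format   (.../$value?size=full)
    as_bytes=False -> Base64 string format (.../?size=full)
    """
    base = f"{org_url.rstrip('/')}/api/data/v9.1/{entity_set}({record_id})/{column}"
    suffix = "/$value?size=full" if as_bytes else "/?size=full"
    return base + suffix
```

For instance, `file_download_url("https://org.example.com", "accounts", "<guid>", "new_filecolumn1")` yields the byte array form, and passing `as_bytes=False` yields the Base64 form.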

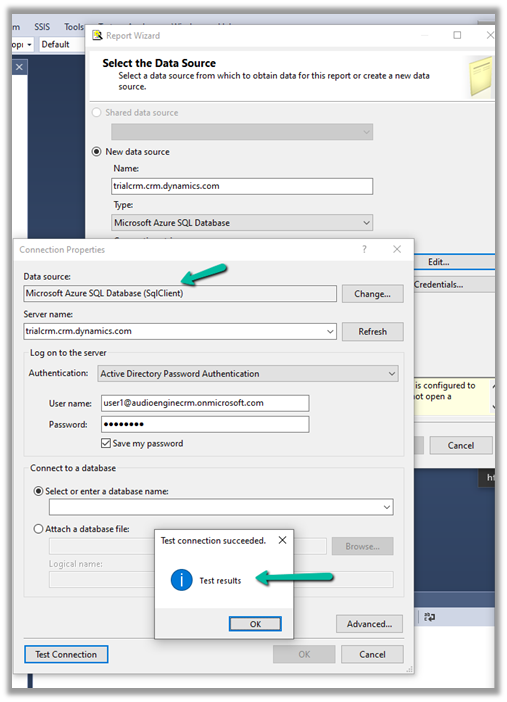

Let us try the URL in the browser for both File Column 1 and File Column 2.

It downloads the file for File Column 1:

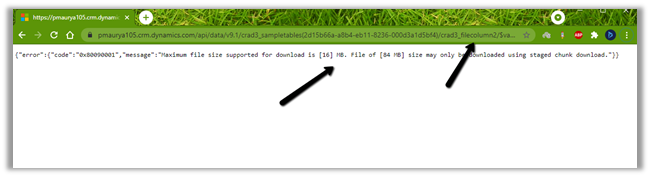

In the case of File Column 2, we get the error message below because its size exceeds 16 MB:

{"error":{"code":"0x80090001","message":"Maximum file size supported for download is [16] MB. File of [84 MB] size may only be downloaded using staged chunk download."}}
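A client can detect this response and fall back to chunked download. The minimal Python sketch below checks a response body for the error code shown above; the function name is hypothetical, and treating `0x80090001` as meaning only "file too large for direct download" is an assumption based on this single observed response.

```python
import json

# Error code taken from the response shown above.
CHUNK_REQUIRED_CODE = "0x80090001"


def needs_chunked_download(response_body: str) -> bool:
    """Return True if the body is an error payload carrying the size-limit code."""
    try:
        payload = json.loads(response_body)
    except json.JSONDecodeError:
        return False  # not JSON, so not the error payload we look for
    error = payload.get("error") if isinstance(payload, dict) else None
    return isinstance(error, dict) and error.get("code") == CHUNK_REQUIRED_CODE
```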

Base64 format:

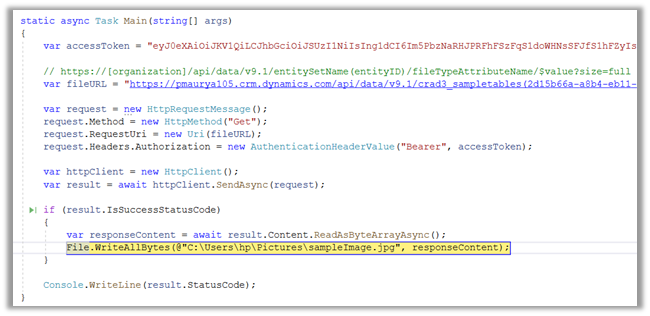

Below is the sample C# code to download the file if its size is less than 16 MB:

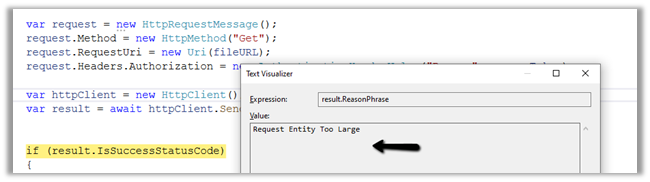

If the file is larger than 16 MB, we get the error below: Request Entity Too Large

For the Image data type, to upload a full image larger than 16 MB (without dividing it into smaller chunks of less than 4 MB), we can use the source code below. It is the same as for the File data type.

For download, we can fetch either the thumbnail or the full image (<= 16 MB) using the same source code used for file download.

For thumbnail –

GET [Organization URI]/api/data/v9.1/entity(recordGuid)/myentityimage/$value

For full image –

GET [Organization URI]/api/data/v9.1/entity(recordGuid)/myentityimage/$value?size=full

For more than 16 MB we need to use chunking.
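To give a feel for what chunked download involves, here is a minimal Python sketch that computes the byte ranges a client would request. It uses standard HTTP `Range` syntax; the exact header Dataverse expects and the 4 MB chunk size for downloads are assumptions, and the function name is hypothetical.

```python
from typing import List

DIRECT_LIMIT = 16 * 1024 * 1024  # above this size, direct download fails
CHUNK_SIZE = 4 * 1024 * 1024     # assumed chunk size for each request


def download_ranges(total_size: int, chunk_size: int = CHUNK_SIZE) -> List[str]:
    """Return HTTP Range header values that together cover total_size bytes."""
    if total_size < 0 or chunk_size <= 0:
        raise ValueError("total_size must be >= 0 and chunk_size > 0")
    ranges = []
    for start in range(0, total_size, chunk_size):
        end = min(start + chunk_size, total_size) - 1  # Range ends are inclusive
        ranges.append(f"bytes={start}-{end}")
    return ranges
```

Under these assumptions, a file of 84 MB would take 21 requests of 4 MB each, and the client would append each response body to the output in order.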

Get all the details here:

https://jukkaniiranen.com/2019/12/why-would-you-store-images-and-files-in-cds/

https://debajmecrm.com/new-data-type-filein-cds-all-you-may-need-to-know-about-it/

Hope it helps.