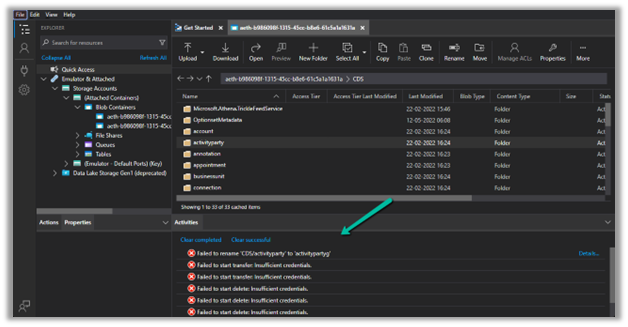

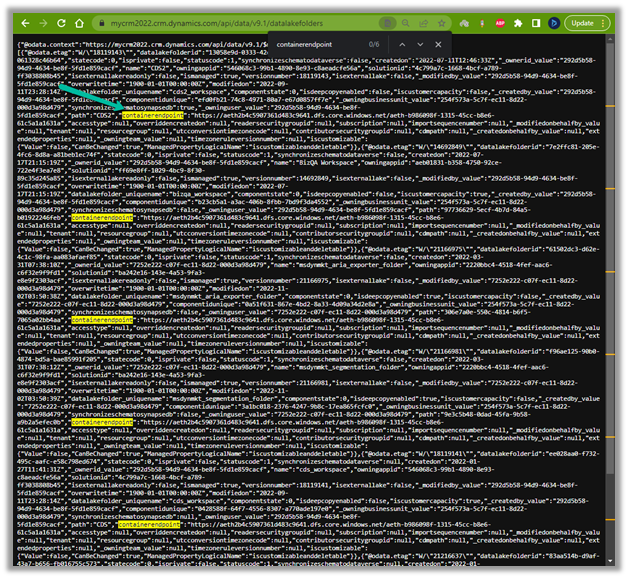

Recently, one of our plugins, which uses the Azure.Storage.Blobs and Azure.Storage.Common libraries to move attachments from notes to Azure Blob Storage, suddenly started throwing the exception below. The plugin had been deployed to the production environment long ago and had been working fine until then.

System.TypeInitializationException: The type initializer for 'Azure.Response' threw an exception. ---> System.IO.FileNotFoundException: Could not load file or assembly 'System.Memory.Data, Version=1.0.2.0, Culture=neutral, PublicKeyToken=cc7b13ffcd2ddd51' or one of its dependencies. The system cannot find the file specified.

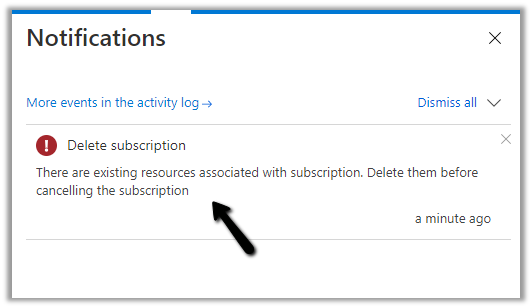

We raised a ticket with Microsoft Support and were informed that a platform update over that particular weekend had stopped loading the "System.Memory.Data" assembly on the plugin server. Since we hadn't included that assembly in our merged package (ILMerge), the plugin could no longer resolve it at runtime, and we started getting the exception.

Also as per Microsoft Docs

So the quick fix at that time was to include System.Memory.Data (set Copy Local to True) along with the Azure assemblies, so that it is merged into the plugin assembly instead of being expected on the server.
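As a rough illustration, setting Copy Local to True in Visual Studio corresponds to a `<Private>True</Private>` element on the reference in the plugin's .csproj file. The fragment below is only a sketch: the `HintPath` (package folder and target framework folder) is an assumption and will differ depending on how the NuGet package was restored in your project.

```xml
<!-- Sketch of a classic (non-SDK style) .csproj reference entry. -->
<!-- The HintPath below is hypothetical; use the path from your own packages folder. -->
<ItemGroup>
  <Reference Include="System.Memory.Data, Version=1.0.2.0, Culture=neutral, PublicKeyToken=cc7b13ffcd2ddd51">
    <HintPath>..\packages\System.Memory.Data.1.0.2\lib\net461\System.Memory.Data.dll</HintPath>
    <!-- Private=True is what "Copy Local = True" writes: the DLL is copied to the output folder, -->
    <!-- where the ILMerge step can pick it up together with the Azure assemblies. -->
    <Private>True</Private>
  </Reference>
</ItemGroup>
```

After rebuilding, it is worth confirming that System.Memory.Data.dll appears in the build output folder and is included in the list of assemblies passed to ILMerge.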

Going forward, we can also look into using a Plugin Package (dependent assemblies) to ship the dependent assemblies with the plugin instead of merging them with ILMerge.
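For reference, a Plugin Package project is an SDK-style project whose NuGet dependencies are bundled into the package that gets uploaded. The fragment below is a minimal sketch, not the exact project used here: the package list and version ranges are assumptions modelled on what the `pac plugin init` template typically generates, so verify them against your own generated project.

```xml
<!-- Sketch of an SDK-style plugin package project; names and versions are assumptions. -->
<Project Sdk="Microsoft.NET.Sdk">
  <PropertyGroup>
    <TargetFramework>net462</TargetFramework>
  </PropertyGroup>
  <ItemGroup>
    <!-- SDK and build tooling: PrivateAssets="All" keeps them out of the plugin package. -->
    <PackageReference Include="Microsoft.CrmSdk.CoreAssemblies" Version="9.0.2.*" PrivateAssets="All" />
    <PackageReference Include="Microsoft.PowerApps.MSBuild.Plugin" Version="1.*" PrivateAssets="All" />
    <!-- Runtime dependencies: these, and their transitive dependencies such as -->
    <!-- System.Memory.Data, are expected to travel with the plugin package. -->
    <PackageReference Include="Azure.Storage.Blobs" Version="12.*" />
  </ItemGroup>
</Project>
```

With this approach the dependent assemblies are deployed alongside the plugin assembly, so the plugin no longer relies on what happens to be loaded on the plugin server.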

Hope it helps..

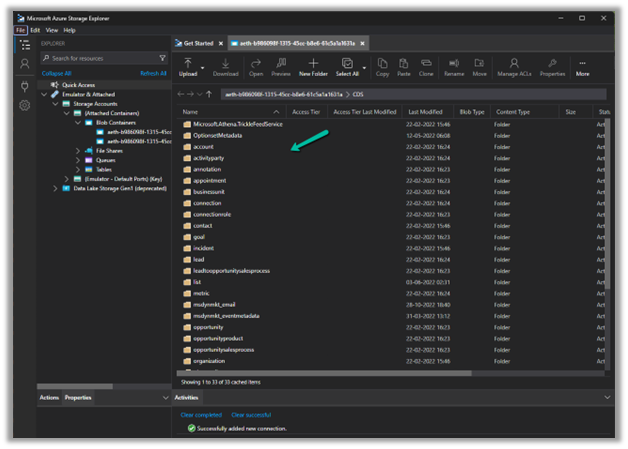

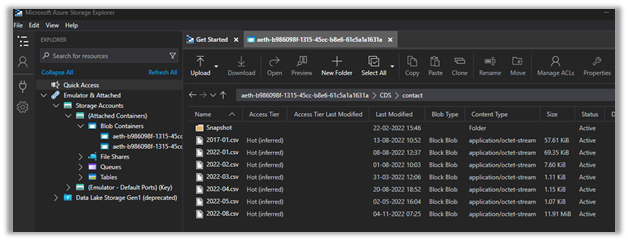

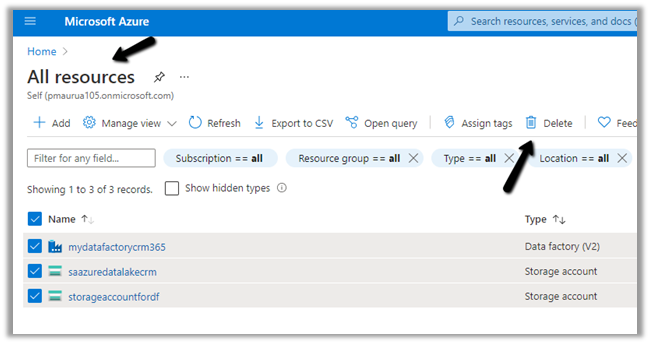

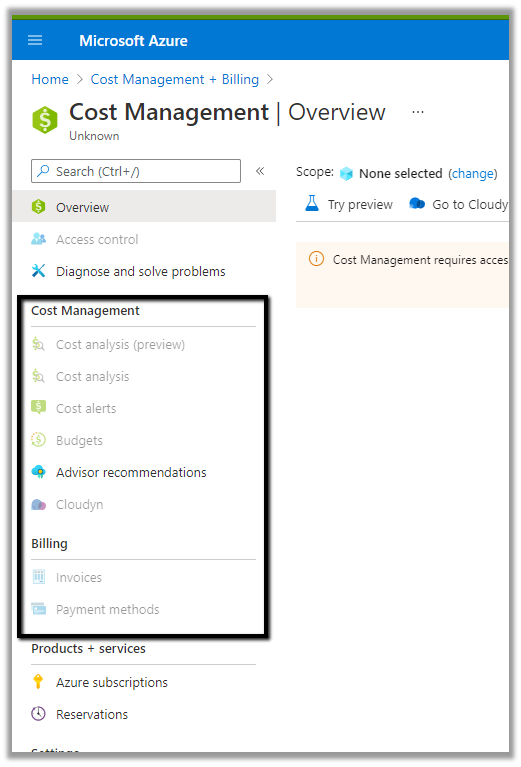

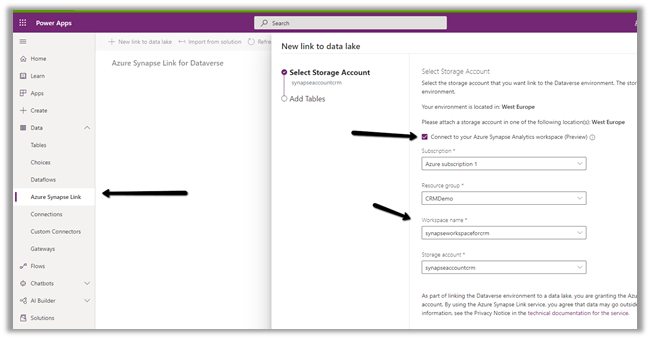

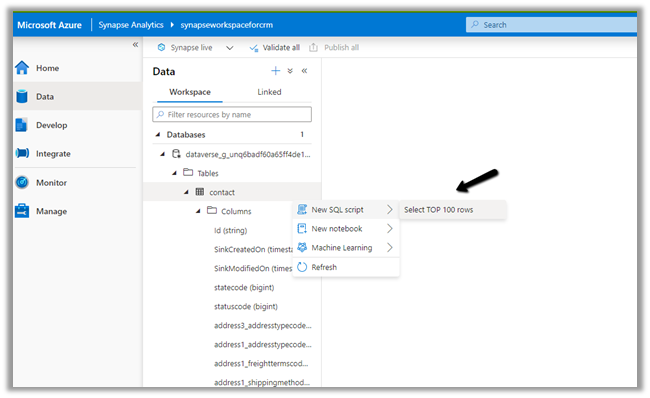

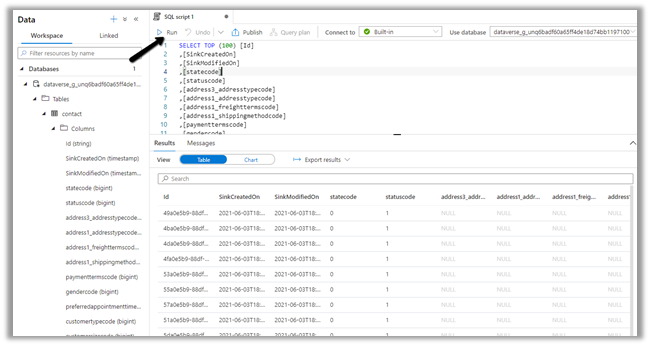

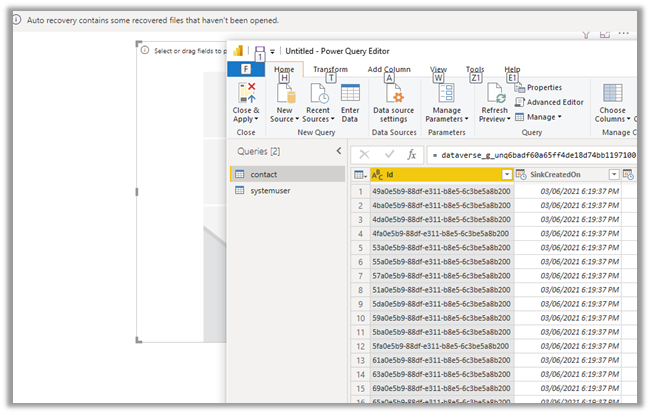

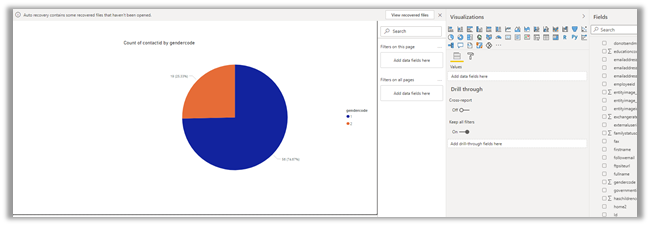

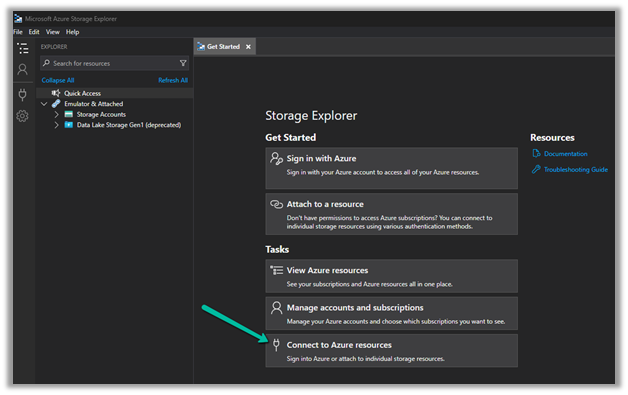

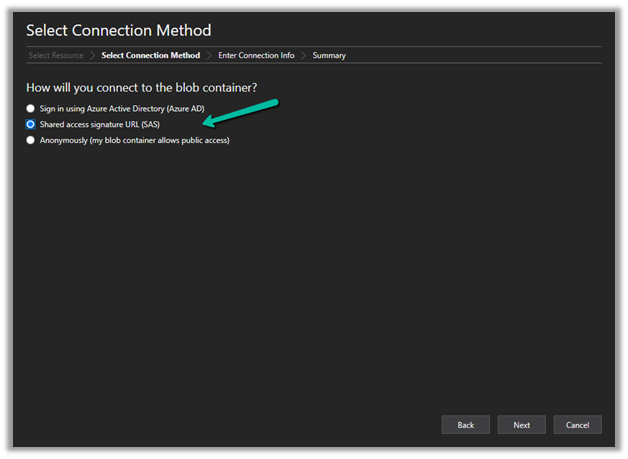

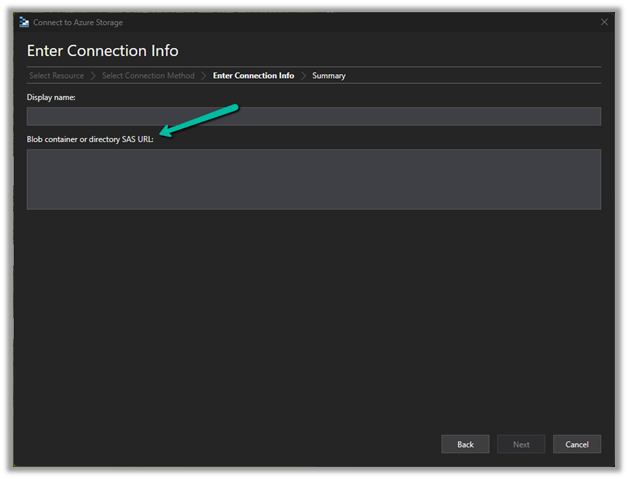

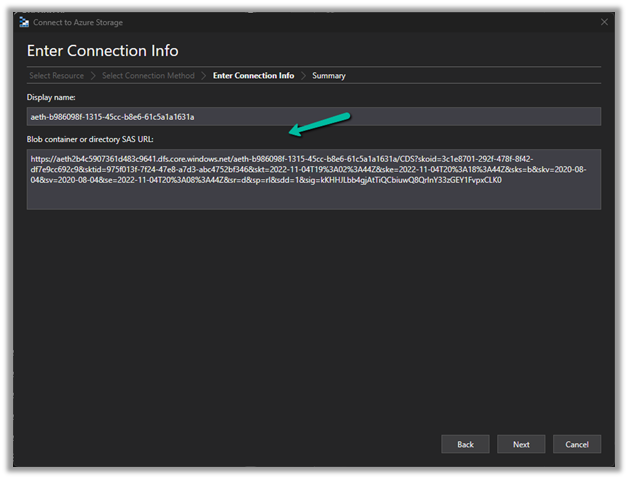

Now we have access to the storage
