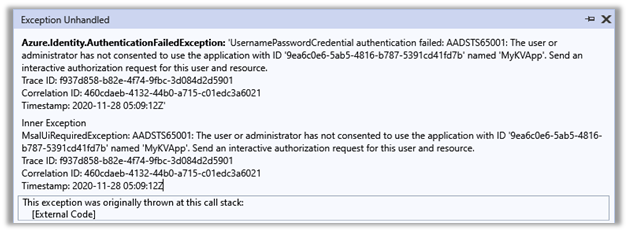

While trying to access Azure resources using UsernamePasswordCredential, we were getting the error below:

Azure.Identity.AuthenticationFailedException: ‘UsernamePasswordCredential authentication failed: A configuration issue is preventing authentication – check the error message from the server for details.You can modify the configuration in the application registration portal. See https://aka.ms/msal-net-invalid-client for details. Original exception: AADSTS7000218: The request body must contain the following parameter: ‘client_assertion’ or ‘client_secret’.

Trace ID: ef6c9e2b-862a-4a8b-9519-9a9072d23301

Correlation ID: 5f9bae95-e45a-4da5-b27c-ad9704e7334e

Timestamp: 2020-11-28 05:58:05Z’

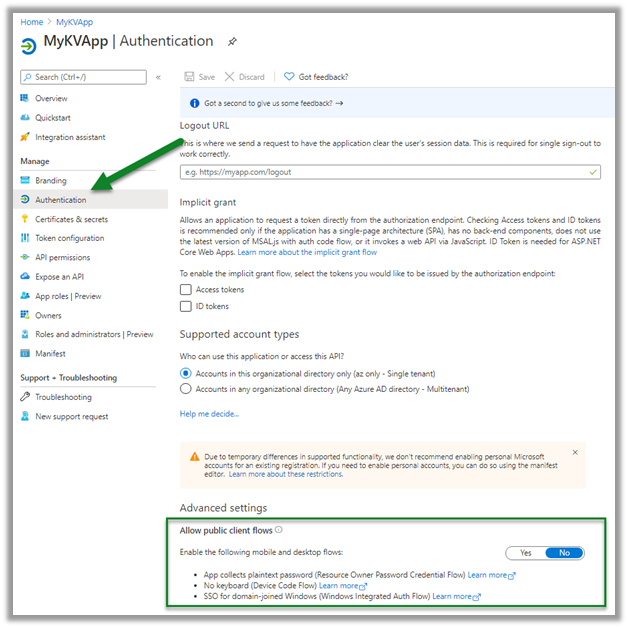

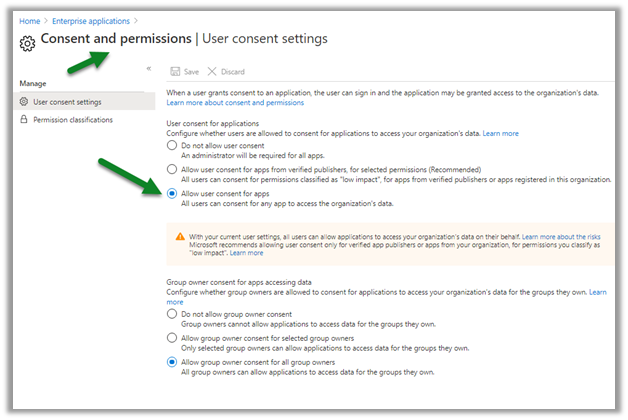

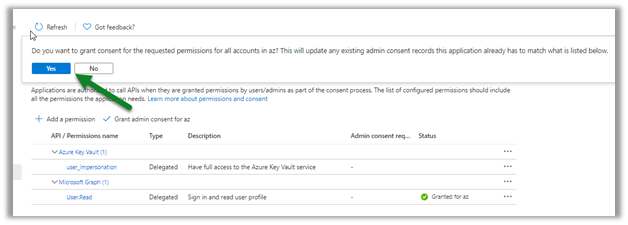

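This was because Allow public client flows was disabled for the application registered in Azure AD. UsernamePasswordCredential uses the Resource Owner Password Credentials (ROPC) flow, which does not send a client secret. With public client flows disabled, Azure AD treats the app as a confidential client and expects a client_secret or client_assertion in the request, which is exactly what AADSTS7000218 complains about.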

Enabling it (app registration > Authentication > Advanced settings > Allow public client flows = Yes) fixed the issue for us.
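If you prefer to script the change instead of using the portal, the toggle appears to map to the isFallbackPublicClient property of the application object in Microsoft Graph. The minimal Python sketch below only builds (and does not send) the PATCH request. It assumes you already have a bearer token with suitable Graph permissions (for example Application.ReadWrite.All) to add as an Authorization header yourself. The helper name build_enable_public_client_request is hypothetical, and the object ID used is a placeholder. Note that it is the object ID of the app registration, not the application (client) ID.

```python
import json

GRAPH_BASE = "https://graph.microsoft.com/v1.0"


def build_enable_public_client_request(app_object_id: str) -> dict:
    """Build (but do not send) a Microsoft Graph request that sets
    isFallbackPublicClient = true on an app registration.

    Hypothetical helper: the caller still has to add an
    'Authorization: Bearer <token>' header and send the request.
    """
    if not app_object_id:
        raise ValueError("app_object_id (the app's object ID) is required")
    return {
        "method": "PATCH",
        "url": f"{GRAPH_BASE}/applications/{app_object_id}",
        "headers": {"Content-Type": "application/json"},
        # Equivalent to 'Allow public client flows = Yes' in the portal
        "body": json.dumps({"isFallbackPublicClient": True}),
    }


if __name__ == "__main__":
    # Placeholder object ID, replace with your app registration's object ID
    req = build_enable_public_client_request("00000000-0000-0000-0000-000000000000")
    print(req["method"], req["url"])
    print(req["body"])
```

Keeping the request construction separate from sending it makes it easy to plug into whatever HTTP client or Graph SDK you already use.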

More on ROPC
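To see why the error asks for client_secret, it helps to look at what an ROPC token request contains. The following is a minimal Python sketch, assuming the Microsoft identity platform v2.0 token endpoint. It only builds the form-encoded body and does not call Azure AD. The helper name build_ropc_request and all sample values are hypothetical. A public client sends no secret, while a confidential client has to include one.

```python
from urllib.parse import urlencode, parse_qs


def build_ropc_request(tenant_id, client_id, username, password, scope,
                       client_secret=None):
    """Build (but do not send) an OAuth 2.0 ROPC token request.

    Returns (token_endpoint_url, form_encoded_body).
    """
    url = f"https://login.microsoftonline.com/{tenant_id}/oauth2/v2.0/token"
    form = {
        "grant_type": "password",  # the ROPC grant type
        "client_id": client_id,
        "scope": scope,
        "username": username,
        "password": password,
    }
    # Public client (Allow public client flows = Yes): no secret is sent.
    # If the app is not marked as a public client, Azure AD expects
    # client_secret (or client_assertion); leaving it out is what
    # produces AADSTS7000218.
    if client_secret is not None:
        form["client_secret"] = client_secret
    return url, urlencode(form)


if __name__ == "__main__":
    url, body = build_ropc_request(
        "contoso.onmicrosoft.com", "my-client-id",
        "user@contoso.com", "p@ss", "https://management.azure.com/.default")
    print(url)
    print(parse_qs(body))
```

Microsoft generally recommends against ROPC where an interactive or certificate-based flow is possible, so treat this as an explanation of the protocol rather than a recommended pattern.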

Hope it helps.

Get all the details here