How to – Access Dataverse environment storage using SAS Token

We can access our environment’s storage using a SAS token. Download Azure Storage Explorer (https://azure.microsoft.com/features/storage-explorer/#overview). Select the Connect to Azure resources option. Select ADLS Gen2 container or directory for the Azure storage type. Select the Shared access signature URL for the connection. To get the SAS URL – https://[ContainerURL]/CDS?[SASToken] – we need ContainerURL and SASToken.…
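The SAS URL pattern above, https://[ContainerURL]/CDS?[SASToken], can be sketched in a few lines of Python. This is only an illustration: the function name `build_sas_url` and the sample values are hypothetical, and the real ContainerURL and SASToken must come from your own environment.

```python
def build_sas_url(container_url: str, sas_token: str, folder: str = "CDS") -> str:
    """Combine a container URL and a SAS token into https://[ContainerURL]/CDS?[SASToken].

    Hypothetical helper for illustration; the values passed in are placeholders.
    """
    host = container_url.strip()
    # Drop a scheme if the caller already included one, so it is not duplicated.
    for scheme in ("https://", "http://"):
        if host.lower().startswith(scheme):
            host = host[len(scheme):]
    host = host.rstrip("/")
    # A SAS token is often copied with a leading "?"; remove it to avoid "??".
    token = sas_token.strip().lstrip("?")
    if not host or not token:
        raise ValueError("Both the container URL and the SAS token are required")
    return f"https://{host}/{folder}?{token}"


# Placeholder values only (assumptions, not real endpoints or tokens).
example_url = build_sas_url(
    "https://examplestorage.dfs.core.windows.net/examplecontainer/",
    "?sv=placeholder&sig=placeholder",
)
print(example_url)
```

The resulting string is what gets pasted into the Shared access signature URL field in Azure Storage Explorer.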

Fixed – Azure Synapse Link for Dataverse not starting

Recently, while trying to configure “Azure Synapse Link for Dataverse” for one of our environments, we faced an issue where it got stuck, as shown below. Also, there were no files/folders created inside the Storage Account. https://nishantrana.me/2020/12/10/posts-on-azure-data-lake/ The accounts were properly set up – https://docs.microsoft.com/en-us/power-apps/maker/data-platform/azure-synapse-link-synapse#prerequisites Here we had only selected the contact table for sync initially, and it…

Fixed – Authorization failed. The client with object id does not have authorization to perform action ‘Microsoft.Authorization/roleAssignments/write’ over scope ‘/subscriptions’ while configuring Azure Synapse Link for Dataverse

Recently while configuring Azure Synapse Link for Dataverse, for exporting data to Azure Data Lake we got the below error – {“code”:”AuthorizationFailed”,”message”:”The client ‘abc’ with object id ‘d56d5fbb-0d46-4814-afaa-e429e5f252c8’ does not have authorization to perform action ‘Microsoft.Authorization/roleAssignments/write’ over scope ‘/subscriptions/30ed4d5c-4377-4df1-a341-8f801a7943ad/resourceGroups/RG/providers/Microsoft.Storage/storageAccounts/saazuredatalakecrm/providers/Microsoft.Authorization/roleAssignments/2eb81813-3b38-4b2e-bc14-f649263b5fcf’ or the scope is invalid. If access was recently granted, please refresh your credentials.”} As well…
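The scope in the error message tells us exactly which resource the role assignment was attempted on. As a rough sketch (the helper names `value_after` and `parse_scope` are hypothetical, not part of any Azure SDK), the scope string can be split to pull out the subscription, resource group, and storage account where the `Microsoft.Authorization/roleAssignments/write` permission is missing.

```python
from typing import Dict, List, Optional


def value_after(parts: List[str], key: str) -> Optional[str]:
    """Return the path segment that follows `key` (case-insensitive), if any."""
    lowered = [p.lower() for p in parts]
    if key.lower() in lowered:
        index = lowered.index(key.lower())
        if index + 1 < len(parts):
            return parts[index + 1]
    return None


def parse_scope(scope: str) -> Dict[str, Optional[str]]:
    """Split an Azure resource scope into the pieces named in the error (illustrative only)."""
    parts = [segment for segment in scope.split("/") if segment]
    return {
        "subscription": value_after(parts, "subscriptions"),
        "resource_group": value_after(parts, "resourceGroups"),
        "storage_account": value_after(parts, "storageAccounts"),
        "role_assignment": value_after(parts, "roleAssignments"),
    }


# Scope copied from the error message above.
scope = (
    "/subscriptions/30ed4d5c-4377-4df1-a341-8f801a7943ad/resourceGroups/RG"
    "/providers/Microsoft.Storage/storageAccounts/saazuredatalakecrm"
    "/providers/Microsoft.Authorization/roleAssignments/2eb81813-3b38-4b2e-bc14-f649263b5fcf"
)
for name, value in parse_scope(scope).items():
    print(f"{name}: {value}")
```

Reading the scope this way makes it clear that the failing action targets the storage account itself, so that is where the user's permissions need to be checked.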

How to – Export Dataverse (Dynamics 365) data to Azure SQL using Azure Data Factory pipeline template

[Visual Guide to Azure Data Factory – https://acloudguru.com/blog/engineering/a-visual-guide-to-azure-data-factory] Using the new Azure Data Factory pipeline template – Copy Dataverse data from Azure Data Lake to Azure SQL – we can now easily export the Dataverse data to Azure SQL Database. https://docs.microsoft.com/en-us/power-platform-release-plan/2021wave1/data-platform/export-dataverse-data-azure-sql-database Check other posts on Azure Data Factory. Select Pipeline from template option inside the…

Fixed – AuthorizationFailed. The client with object id does not have authorization to perform action ‘Microsoft.Authorization/roleAssignments/write’ over scope ‘storageaccount’ – Azure Data Lake

While configuring the Azure Synapse Link / Export to Data Lake service, we were getting the below error for one of the users. {“code”:”AuthorizationFailed”,”message”:”The client ‘nishantr@pmaurua105.onmicrosoft.com’ with object id ‘d56d5fbb-0d46-4814-afaa-e429e5f252c8’ does not have authorization to perform action ‘Microsoft.Authorization/roleAssignments/write’ over scope ‘/subscriptions/30ed4d5c-4377-4df1-a341-8f801a7943ad/resourceGroups/RG/providers/Microsoft.Storage/storageAccounts/saazuredatalakecrm/providers/Microsoft.Authorization/roleAssignments/2eb81813-3b38-4b2e-bc14-f649263b5fcf’ or the scope is invalid. If access was recently granted, please refresh your credentials.”} The current…

How to setup – Azure Synapse Link – Microsoft Dataverse

Azure Synapse Link (earlier known as Export to Data Lake Service) provides seamless integration of Dataverse with Azure Synapse Analytics, making it easy for users to do ad-hoc analysis using the familiar T-SQL with Synapse Studio, build Power BI reports using the Azure Synapse Analytics connector, or use Apache Spark in Azure Synapse for analytics.…

Azure Synapse Link / Export to Data Lake Service – Performance (initial sync)

Recently we configured the Export to Data Lake service for one of our projects. Sharing the performance we got during the initial sync. Entity – Count: Contact – 2,36,2581; Custom entity – 1,61,3554. The sync started at 11:47 A.M. and was completed around 4:50 P.M. – around 5 hours, i.e. 300 minutes. Let us consider total records…
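The figures above are enough for a rough throughput estimate. The sketch below is not the calculation from the full post. It assumes that the record counts are read by simply stripping the commas (the digit grouping in the excerpt is unusual, so this is a guess at the intended values) and that the sync ran from 11:47 to 16:50 on the same day.

```python
from datetime import datetime


def parse_count(text: str) -> int:
    """Read a record count by dropping the comma separators (assumption: digits are correct)."""
    return int(text.replace(",", ""))


def elapsed_minutes(start: str, end: str, fmt: str = "%H:%M") -> float:
    """Minutes between two same-day times given in 24-hour HH:MM form."""
    delta = datetime.strptime(end, fmt) - datetime.strptime(start, fmt)
    return delta.total_seconds() / 60


# Counts as they appear in the excerpt; the interpretation is an assumption.
counts = {"Contact": "2,36,2581", "Custom entity": "1,61,3554"}

total_records = sum(parse_count(value) for value in counts.values())
minutes = elapsed_minutes("11:47", "16:50")  # 11:47 A.M. to 4:50 P.M.
records_per_minute = total_records / minutes

print(f"Total records: {total_records}")
print(f"Elapsed minutes: {minutes:.0f}")
print(f"Approximate records per minute: {records_per_minute:.0f}")
```

Under these assumptions the estimate comes out at roughly 13 thousand records per minute. It is a ballpark figure for one environment, not a benchmark.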

Advanced configuration settings – Azure Synapse Link / Export to Data Lake service (Dataverse/ Dynamics 365)

The Export to Data Lake service now has some Advanced configuration settings available. To learn more about the Export to Data Lake service: https://nishantrana.me/2020/12/10/posts-on-azure-data-lake/ The new settings allow us to configure how the Dataverse / CRM table data is written to Azure Data Lake. The options are In-place update or upsert (default) and Append only. With the in-place update, the…

Fixed – Initial sync status – Not Started – Azure Synapse Link / Export to Data Lake

Recently, while configuring the Export to Data Lake service, we observed the initial sync status being stuck at Not started for one of the tables. The Manage tables option was also not working. All changes for existing tables are temporarily paused when we are in the process of exporting data for new table(s). We will resume…

DSF Error: CRM Organization cannot be found while configuring Azure Synapse Link / Export to Data Lake service in Power Platform

Recently, while trying to configure the Export to Data Lake service from the Power Apps maker portal, we got the below error. DSF Error: CRM Organization <Instance ID> cannot be found. More on configuring the Export to Data Lake service – https://nishantrana.me/2020/12/10/posts-on-azure-data-lake/ The user account we were using for the configuration had all the appropriate rights. https://docs.microsoft.com/en-us/powerapps/maker/data-platform/export-to-data-lake#prerequisites The…

How to – Migrate Dataverse environment to a different location within the same Datacentre region – Power Platform

When we create an environment in the Power Platform admin center, we get the option of specifying the datacenter region, but not the location within it. Find the Data Center Region / Location of your Dataverse Environment- https://nishantrana.me/2021/04/27/finding-the-datacenter-region-location-of-the-microsoft-dataverse-environment/ E.g. we have specified Region as the United Arab Emirates. Now within the UAE region, we had…

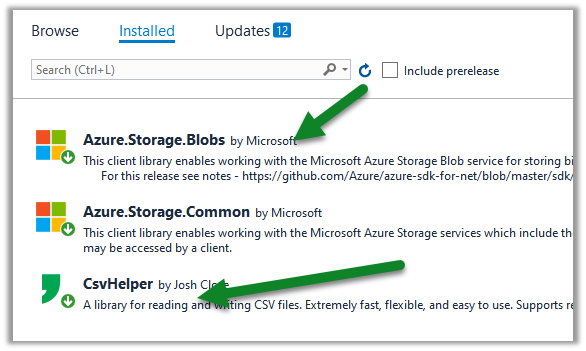

Azure Data Lake Storage Component in KingswaySoft – SSIS

Download and install the SSIS Productivity Pack (https://www.kingswaysoft.com/products/ssis-productivity-pack/download/). Drag the Azure Data Lake Storage Source component into the data flow. Double-click it and click on New to specify the connection. Provide the connection details and test the connection. It supports both Gen 1 and Gen 2. It supports the below authentication modes. Inside the Azure Data…

Use query acceleration to retrieve data from Azure Data Lake Storage

A few key points about query acceleration: Query acceleration supports an ANSI SQL-like language to retrieve only the required subset of the data from the storage account, reducing network latency and compute cost. Query acceleration requests can process only one file, so joins and group by aggregates aren’t supported. Query acceleration supports both Data Lake…

Upcoming updates to Azure Synapse Link / Export to Data Lake Service – 2020 Release Wave 2

As we know, the Export to Data Lake service enables continuous replication of CDS entity data to Azure Data Lake Gen 2. Below are some of the interesting updates coming to it – Configurable Snapshot Interval – currently the snapshots are created hourly – which will be configurable. Cross Tenant Support – Currently Azure Data Lake Gen2…

Snapshot in Azure Data Lake (Dynamics 365 / CDS) – Azure Synapse Link

In the previous post, we saw how to export CDS data to Azure Data Lake Gen 2 https://nishantrana.me/2020/09/07/export-data-from-common-data-service-to-azure-data-lake-storage-gen2/ Here let us have a look at how the sync and snapshot work. We have already done the configuration and have synced the Account and Contact entities. As the diagram depicts – there is an initial sync followed by…

Use Power BI to analyze the CDS data in Azure Data Lake Storage Gen2

In the previous post, we saw how to export CDS data to Azure Data Lake Storage Gen2. Here we’ll see how to write Power BI reports using that data. Open Power BI Desktop and click on Get data. Select Azure > Azure Data Lake Gen 2 and click on Connect. To get the container…

Error – We don’t support the option ‘HierarchicalNavigation’. Parameter name: HierarchicalNavigation when trying to load table in Power BI Desktop using Azure Data Lake Storage Gen 2 CDM Folder view (beta)

While trying to connect to a table within Azure Data Lake Storage Gen2 through CDS Folder View, we got the below error. Users have reported this issue with the August 2020 Update of Power BI Desktop. As suggested in the forums, downgrading to the June 2020 Update fixed the issue for us. Check out Export CDS data…

Fixed – Error – Access to the resource is forbidden while trying to connect to Azure Data Lake Storage Gen2 using Power BI Desktop

While trying to connect to Azure Data Lake Storage Gen2 through Power BI Desktop, we got the below error. This came as a surprise because the user had the Owner role assigned on the container. It turned out we need to assign the Storage Blob Data Reader role to the user. After assigning the role we…

How to – Use Azure Synapse Link – Export data from Common Data Service to Azure Data Lake Storage Gen2

Azure Data Lake Storage Gen2 can be described as a large repository of data, structured or unstructured, built on top of Azure Blob storage, that is secure (encryption of data at rest), manageable, scalable, cost-effective, and easy to integrate with. Also check out – setup Azure Synapse with Dataverse – https://nishantrana.me/2021/06/16/how-to-setup-azure-synapse-link-microsoft-dataverse/ Export to Data Lake…